The Testing Mistake That Changed Everything

At one global company where I worked, our team was working hard to build a test-driven culture. We had become obsessed with generating feature test ideas, launching experiments, and sharing learnings across the organization. It felt like we were finally getting scientific about product development, and we had the activity to show for it.

One particularly ambitious project came from our competitive intelligence team, who had been meticulously tracking the testing patterns of one of our largest competitors. We were monitoring which tests they ran and whether they later rolled the tested changes into production. This seemed like the perfect data-driven strategy: be a fast follower of what the industry leader was doing.

Our logic was flawless. Our execution was systematic. But our results were terrible.

For months, we failed to achieve positive results from tests that our competitor had apparently validated and implemented. We couldn't understand why. This company was an industry leader known for its testing methodology, and we were following its exact approach. The data should have worked.

Then someone made a discovery that changed how I thought about data forever.

Buried in our competitor's quarterly financial filings was a shocking admission: they had identified a critical bug in their testing software that had been showing winners as losers and losers as winners for an unknown amount of time. They were notifying investors that they were working to resolve the issue, going back through past tests, and reconciling the results. Their financial performance would be impacted for several quarters as they fixed the problem.

We had been copying a broken system the whole time.

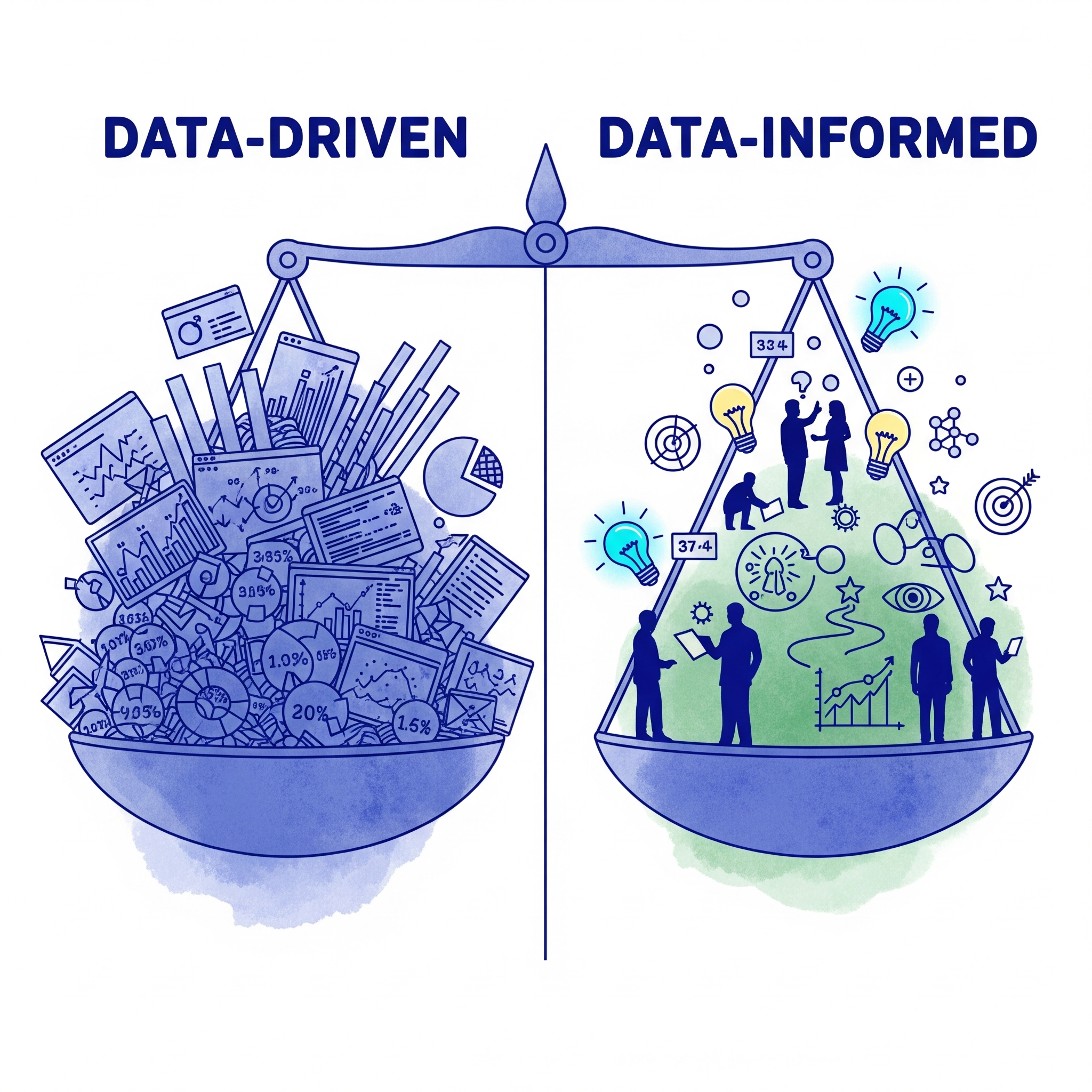

The Trap of "Data-Driven"

We had done everything right by the data-driven playbook. We identified patterns, followed the evidence, executed systematically. And it was all worthless because we never questioned the source.

That's the trap of being data-driven rather than data-informed. When you follow data without questioning its context, you're not being scientific -- you're being blind with extra steps. We had more analytics sophistication than most teams in our industry. We had a dedicated competitive intelligence function. We had rigorous testing processes. None of it mattered because the entire system was built on the assumption that the data we were following was sound.

What Data-Informed Would Have Looked Like

We would have questioned the source before following the signal. If we'd been data-informed rather than data-driven, someone would have asked why we couldn't consistently replicate our competitor's apparent wins. We would have treated the persistent gap between their reported wins and our actual results as a signal worth investigating, not just a problem with our execution.

We would have trusted the tension instead of dismissing it. There were people on the team who felt something was off. The results we were copying just weren't working, and the explanations for why kept shifting. A data-informed team treats that gut-level discomfort as important information. We treated it as noise and kept following the numbers.

We would have looked at the broader context, not just the metrics. A competitor's testing patterns don't exist in isolation. Their financial performance, their public statements, the consistency of their results over time -- all of that is context that should inform how much weight you give their data. We were so focused on the test-level details that we never stepped back to ask whether the whole picture made sense.

That testing mistake cost us millions in lost opportunities and misdirected resources. But it taught me something worth more: the most dangerous data is the data you never think to question.