When Crisis Becomes Catalyst

I took over a product that had been launched as a side project with no validation or rules, creating a data integrity nightmare with duplicate, conflicting, and incomplete data. My job was to clean up this mess and scale from $5M to $100M in a few months.

Most teams would have seen this as a crisis to manage. But we saw something different: an opportunity to solve a systemic problem that had been plaguing our entire organization.

Instead of just building a validation service for this particular product, we built a service that worked across all data ingest points. This became the foundation for a whole new content management service, helped us clean up our entire data catalog, and positioned us perfectly for a large migration project.

What started as a crisis became the catalyst for building one of our most valuable internal services. We didn't just survive the disruption -- we used it to get stronger.

Don't Just Bounce Back -- Push Forward

What we did with that data integrity nightmare has a name: anti-fragility. We didn't just survive the crisis -- we used it to build something that made the whole organization stronger.

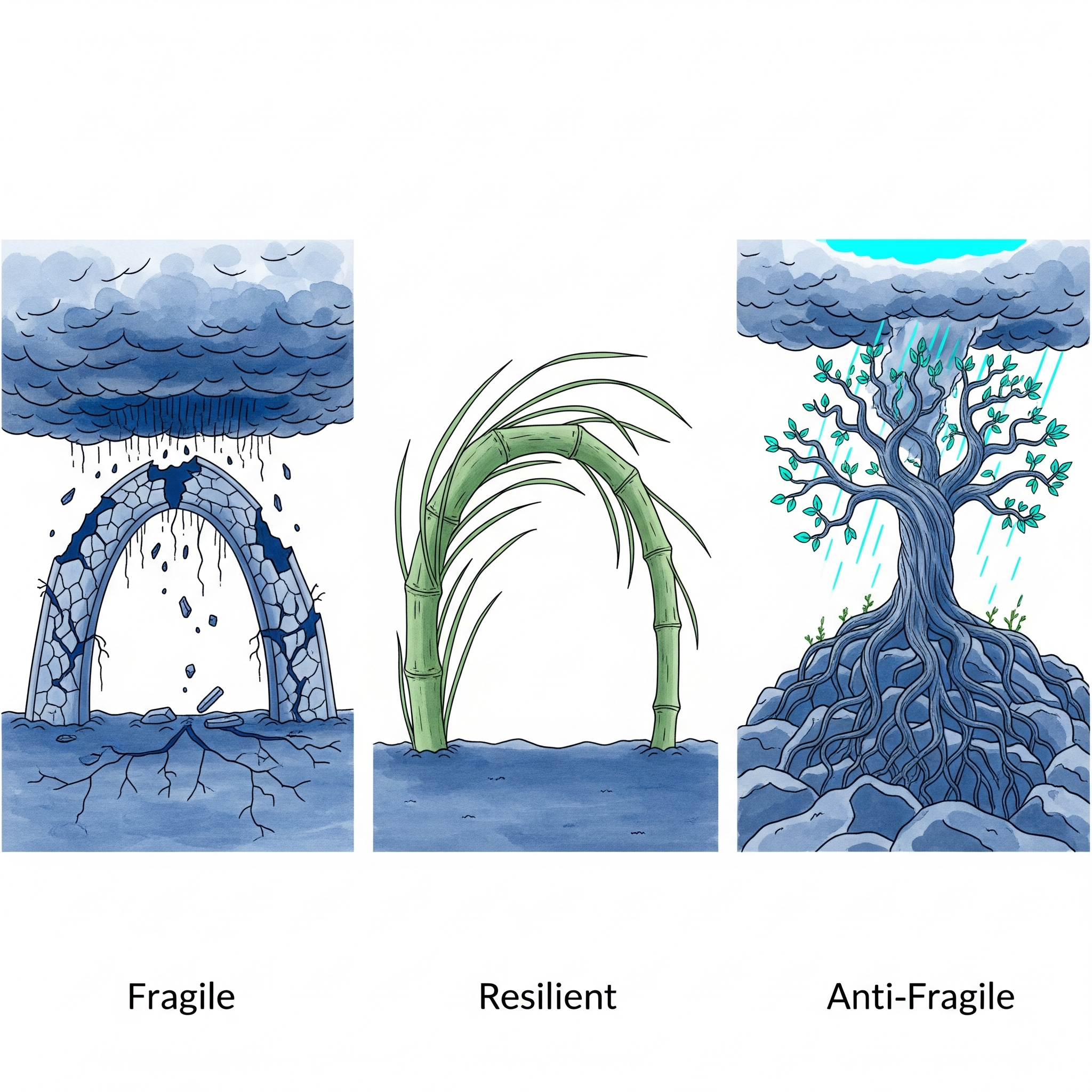

A resilient team would have cleaned up the data and moved on. They would have fixed the duplicates, patched the gaps, and gotten back to the roadmap. That's what most teams do, and it's not a bad outcome. But we went further. We built a validation service that prevented the problem from ever recurring across any product in our catalog. We turned a mess into infrastructure.

That's the difference between resilience and anti-fragility. Resilience means you survive the hit and return to where you were. Anti-fragility means the hit itself makes you stronger than you were before.

What Made That Possible

Looking back, three things allowed us to turn that crisis into a lasting advantage rather than just a recovery story.

We built something bigger than the immediate fix. Our validation service was born from a crisis in one product, but we designed it to work across every data ingest point in the organization. Instead of plugging one hole, we reinforced the entire foundation. When the large migration project came along months later, we were already prepared because the crisis had forced us to build the right infrastructure early.

We let the crisis show us what we'd been missing. The data integrity problems in our product weren't unique -- they existed across the organization. Nobody had seen the full picture because each team was focused on their own silo. The crisis gave us a reason to look broadly, and what we found led to cleaning up our entire data catalog. The chaos was full of information we wouldn't have gathered any other way.

We shifted from "fix the problem" to "build something better." Early on, the team's instinct was to clean up the mess and get back to the growth roadmap as fast as possible. The turning point came when we stopped thinking of the crisis as an interruption and started thinking of it as a design opportunity. That mindset shift -- treating disruption as raw material rather than an obstacle -- is what turned a data nightmare into one of our most valuable internal services.

The Worst Messes, the Best Solutions

I keep coming back to what that experience taught me. We didn't plan to build a company-wide validation service. We didn't have "create foundational data infrastructure" on any roadmap. The crisis handed us a problem so bad that the only real solution was something far more valuable than what we'd lost.

That's the thing about disruption: you can't avoid it. Markets shift, competitors surprise you, internal priorities change overnight. The question isn't whether it will happen -- it's whether your team treats it as something to endure or something to use. The worst messes often contain the seeds of the best solutions. You just have to be willing to look for them.