When AI Gets the Data Right But You Get the Decision Wrong

I remember being part of a team that had early access to some LLM tools. We were excited about using AI to generate content for physical properties in our marketplace. The technology seemed perfect: fast, consistent, and data-driven. We ran a quick test deployment and watched the speed and volume of our content generation improve dramatically.

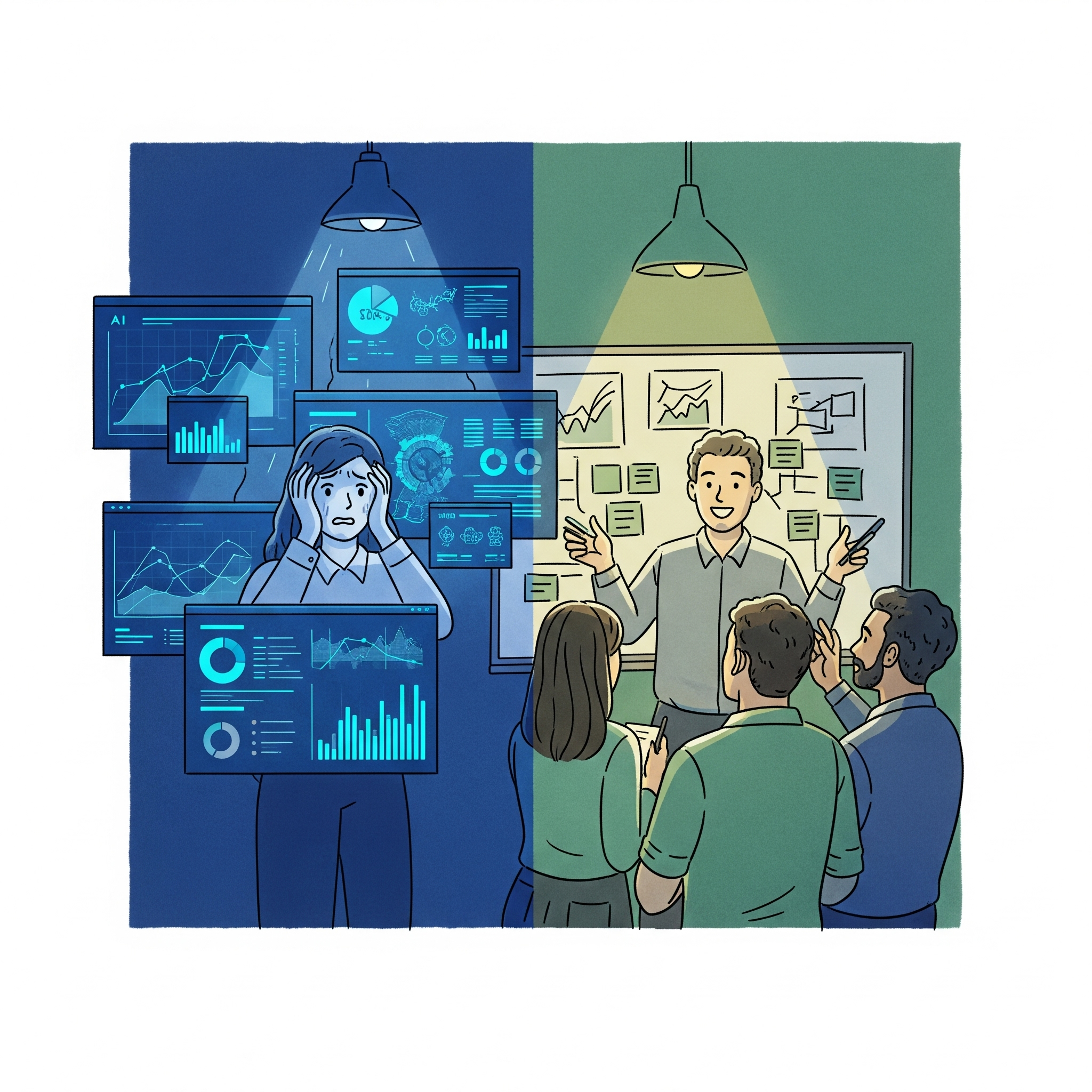

But then we hit a wall. The AI was producing beautifully formatted, grammatically perfect content that was also completely wrong about key product specifications. Items were mislabeled, features were hallucinated, and amenities and services were invented. We tried adding checks and validations, but the resulting content was as generic as what our original basic system had produced.

We had forgotten that selling physical products requires human judgment about what details matter to real customers, which features to emphasize for different audiences, and how to balance accuracy with compelling marketing. The AI could process more data points than any person could, but it couldn't understand the subtle human context that makes content actually useful.

That was the first lesson: AI can produce volume, but it can't produce judgment about what actually matters. The second lesson came from watching someone try to shortcut understanding itself.

The Shortcut That Wasn't

I worked with a highly ambitious product manager on my team. She was intelligent, driven, and wanted to excel at product management. When she asked for book recommendations, I suggested one of my favorites, a classic that had shaped my thinking over years of real-world application.

The next day, she came back with questions about the book. I was impressed by her speed until I learned she had only read AI-generated summaries and bullet points. While she could recite the framework basics, she had missed the deeper insights that come from wrestling with complex ideas, understanding the context behind each lesson, and connecting the concepts to real situations.

We spent the next several weeks reading it together, chapter by chapter. As we discussed each section, she began to understand not just what the frameworks were, but why they worked, when they didn't, and how to adapt them to unique situations. The difference between surface knowledge and deep understanding became clear.

Both experiences pointed to the same conclusion: AI is a powerful tool when you bring the judgment. It becomes dangerous when you expect it to supply the judgment for you. Our content tool failed because we outsourced the decisions about what customers care about. The book summaries failed because they stood in for the thinking that makes frameworks actually useful. But it took a third experience to show me what using AI well actually looks like.

What Using AI Well Actually Looks Like

When I tried using AI tools to write a Product Requirements Document, the first attempts were predictably bad. The AI hallucinated features, made wildly optimistic growth projections, and created solutions disconnected from our actual technical constraints and market realities. After the content generation disaster and the book summary shortcut, I recognized the pattern immediately: I was asking AI to do my thinking again.

So I changed my approach. I outlined my own ideas first, then prompted the AI to challenge my assumptions. I guided the iterative refinement while letting it help with research and structure, and I validated everything against real-world constraints. The result was genuinely better than what I would have produced alone, not because the AI supplied the judgment, but because it helped me sharpen mine.

That's the pattern across all three experiences. The content tool failed because we asked AI to decide what matters to customers. The book summaries failed because they replaced the struggle that builds real understanding. The PRD worked because I kept the judgment and used AI to extend my reach. The more powerful AI becomes, the more human judgment matters, not less. The tool works when you bring the thinking. It falls apart the moment you hand the thinking over.